port bootstrap build-ordering tool report 3

Timestamp:

Tags:

blog

A copy of this post is sent to soc-coordination@lists.alioth.debian.org as well as to debian-bootstrap@lists.mister-muffin.de.

Diary

June 18

Pietro suggests a faster way to generate installation sets for a list of packages. In my case, I need an installation set for every source package in the archive to find out how often a binary package is needed to build a source package. As a result, the speed is doubled in contrast to the original approach.

June 19

- adapt code to work with new dose release 3.0

- remove unneeded parts of code

- add different possibilities to find amount of source packages that need a binary package

- add code to get multiple installation sets using Depsolver_int.solve

June 20

- add ~global_constraints:false to Depsolver.listcheck, Depsolver.trim and Depsolver.edos_install calls

- adapt output graph to limited xdot capabilities

June 21

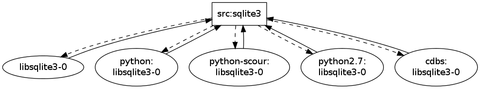

I formulate an email to the list, reporting of dependency graphs of debhelper, cdbs, pkg-config and libgtk2.0-dev. My current technique gets an installation set for a source package, removes all those that are already installable and adds the others as a dependency of that source package. This dependency will include an installation set of that binary as well minus all packages that are already available. The problem with that approach are dependency cycles created by long dependency chains. Example: src:A needs B needs C needs A. B and C would both be added as a dependency of src:A. B as well as C would also include their installation set which in both cases includes A. So now there are two cycles: src:A->B->A and src:A->C->A. For a real life example, look at the following situation of cdbs and src:sqlite3.

It is created because src:sqlite3 needs cdbs needs python-scour needs python needs python2.7 needs libsqlite3-0. Therfor libsqlite3-0 is in the installation set of cdbs, python-scour, python and python2.7. This creates five cycles in the graph even though there is only one. It would be better to reduce the dependencies added to src:sqlite3 to its direct dependency which is cdbs.

Package dependencies are disjunctions from which the solver chooses one or the other to build an installation set. To solve the problem above I would need to know which disjunction the solver chose and then only add the direct dependency of a package to the dependency graph.

- improve build_compile_rounds performance

- big overhaul of menu structure

- fix subgraph extraction code

June 22

- do not create a universe if not needed - use hashtables instead

- for sorting packages, generating difference of package sets and otherwise comparing packages, always use CudfAdd.compare

- as a custom list membership function, use List.exists instead of trying List.find

- more speedup for build_compile_rounds

- the number of source packages that can be built does NOT include the cross built packages

- print closure members in graph

- refactor code and move common functions to bootstrapCommon.ml

- add breakcycles.ml for future code to break cycles using staged build dependencies

- use more extlib functionality

- extended package list input format

June 23

After several emails with Pietro I learn about syntactic dependency graphs. To document my progress and prove my efforts I committed the code as commit 6684c13. But this code was soon found out to be unecessary so it will be removed later and just serves as documentation.

June 24

I came up with another (better?) solution to get the chosen disjunctions. It simply uses the calculated installation set to decide for each disjunction which one was taken by the solver. I reported that important step and the open questions involved with it in an email to the list. The problem always was, that an installation set can easily contain more than one package of a disjunction. In this case it is not clear which branch was chosen by the solver. I found, that in Ubuntu Natty there are only 6 such packages and for each of them the situation can be solved. It can be solved because in all of those cases it is that either one package of a disjunction provides the other or that both packages depend upon each other, which means that both have to be included.

June 27

- use installation set to flatten build dependencies of source packages

- refactor code and move common functions to bootstrapCommon.ml

June 25

I have to have an algorithm that finds all circuits in a given graph. This is necessary so that:

- cycles can be enumerated for huge dependency graphs where cycles are hard to see

- cycles can be enumerated to find a cycle that can be broken using staged build dependencies

It seems that Johnson’s algorithm is the best way to do this complexity wise and Pietro already blogged about the problem together with an implementation of the algorithm in ocaml. Unfortunately it turns out that his code doesnt implement the algorithm correctly and hence misses out on some cycles. The fix seems not to be too trivial so I’m still investigating it.

June 28

- add crosseverything.ml to obtain a list of source packages that, if cross compiled, would make the whole archive available

Results

While the first week was productive as usual, I had to work some time on a University project during the second week as well as attend a family meeting. I will catch up with the lost time over the course of the next week.

dose3

Using dose 3.0 (which fixes a bug about essential packages) the output of the algorithms is now likely less wrong then before.

performance

Performance was improved in the generation of installation sets as well as in the code that tries out how many packages can be built in multiple rounds. This was achieved by more caching, less unnecessary operations in looping constructs, replacing lists with hashtables, not creating universes where not necessary.

user interface

The main program, basenocycles.ml now has a much better menu structure.

input format

The programs take two package file inputs. The list of source packages that has to be cross built for a minimal build system and the list of source packages that was chosen to be cross compiled in addition to that. Both files list one source package per line and now allow comments.

refactoring

As more and more scripts are added, more and more functionality is moved to bootstrapCommon.ml which makes each script much cleaner.

what to test for cross building

As discussed in the “Future” section of the last report, I now automated the process of finding out which packages, if they were cross compiled, would make the whole archive available because they break all cycles and allow native compilation of the rest. The outcome: to build 3333 out of 3339 packages in natty natively, at most 186 source packages must be cross compiled. The other six cannot be compiled because of version mismatches in the Natty Sources.bz2 and Packages.bz2. The code can be run from crosseverything.ml.

limit source dependencies to direct dependencies

Reducing the dependencies of source packages from their full installation set to their direct dependencies by finding out which disjunction of their dependency list were taken, greatly simplifies the dependency graphs. The dependency graph created for libgtk2.0-dev could be reduced from 491 to 247 vertices for a depth of three.

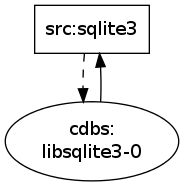

For cdbs it is now clearly visible that cdbs depends on libsqlite3-0 which builds from src:sqlite3 which depends on cdbs.

Before:

After:

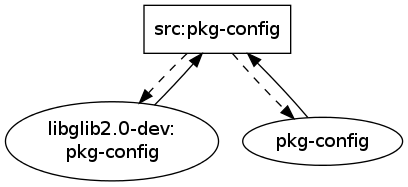

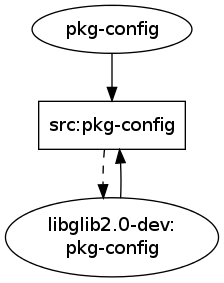

For pkg-config the graph also has been reduced to the one single cycle that matters: src:pkg-config needs libglib2.0-dev which depends on pkg-config which builds from src:pkg-config.

Before:

After:

Future

I will prepare content for wookey’s debconf talk on crossbuilding and bootstrapping. As this will include directions how to use the current code, I will kill two birds with one stone and write some proper documentation for my current source.

The following two lists will be displayed after a dependency graph is calculated and reduced to its scc:

- those source packages that have the least build dependencies not fulfilled. Those might be candidates for easy staged build dependencies. Since the source package is part of the scc, it will definitely be involved in some cycle somewhere.

- those binary packages that most source packages depend upon. Those could be candidates for cross compilation as it might be easier to cross compile the source package than using staged build dependencies.

Patrick managed to cross build packages with sbuild now. So the list of packages that crosseverything.ml produces can now be checked efficiently for cross buildability. With this list, potentially more cycles can be broken out of the box. A feature will be added that allows the user to remove all packages from a dependency graph that can be cross compiled without any additional effort.

Version mismatches between source and binary packages in Sources.bz2 and Packages.bz2 respectively in Ubuntu make the scripts fail and/or produce wrong results. Debian (even Sid) doesnt have this problem so I should find out where to report this problem to Ubuntu.

I need to write a working version of Johnson’s algorithm because much functionality depends upon it. I have the option to improve Pietro’s version or write one from scratch. Writing one from scratch might be easier as I have Pietro’s code as template as well as a Java implementation of Johnson’s algorithm which seems to work.

The following functionalities need working cycle enumeration:

- given source packages with staged build dependencies, an enumeration of cycles is needed to find out which cycles can be broken by building packages staged. It makes less sense to blindly build a package stage and then check if this makes more packages available.

- display cycles of a dependency graph to the user. After obtaining all cycles in the graph it makes sense to sort them by their length. The user would then investigate the situation of the smallest cycles first. This makes sense because breaking small cycles can potentially break bigger cycles. Since in the end, all cycles have to be eliminated anyway, it makes sense for the user to first tackle the small ones.

- display the feedback arc set to the user. The packages in the feedback arc set might be very good candidates for reduced build dependencies or cross compilation.